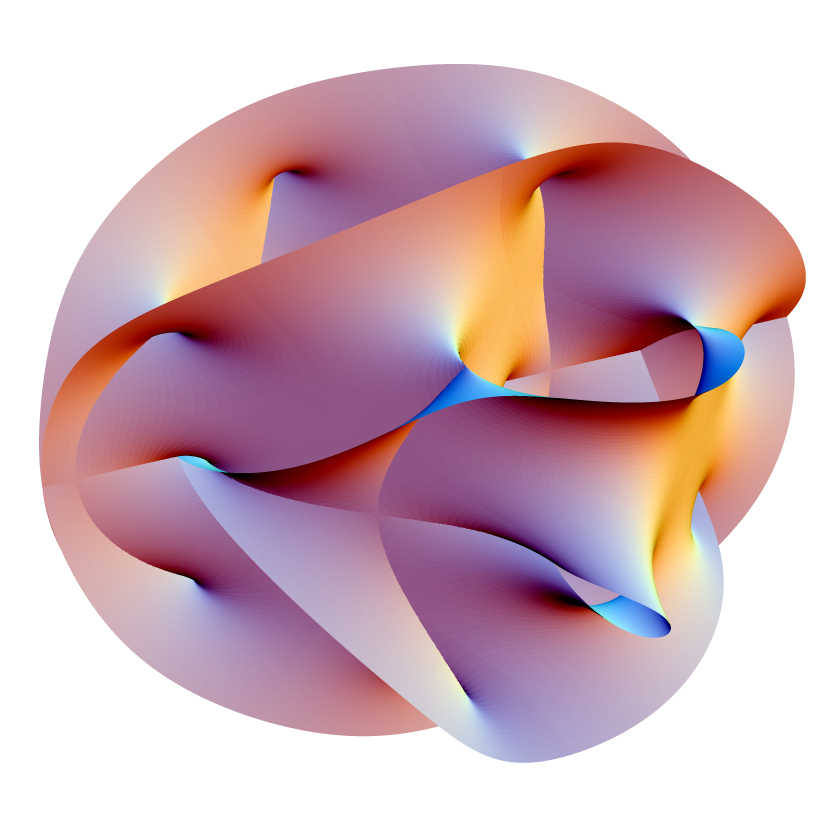

A 2D slice of a 6D Calabi–Yau quintic manifold. Visualization by Andrew J. Hanson via Wikimedia Commons.

What Twelve Years in Physics Taught Me About AI Hardware


The question I kept asking in graduate school was not actually about strings, or extra dimensions, or the landscape. The real question, the one underneath all of it, was this: in a space with an astronomical number of possible configurations, which ones survive, and why?

It turns out that is also the question underneath AI hardware, although most of the people building AI hardware have not noticed.

In 2006 I wrote a paper with Tom Banks and B. Fiol, published in the Journal of High Energy Physics. Banks is one of the architects of M-theory and of the BFSS matrix model. The paper was about de Sitter space, the geometry of a universe with a positive cosmological constant. It is the geometry of the universe you are currently sitting in.

The result we conjectured is strange enough that I still think about it almost every day. You can construct a quantum theory of de Sitter space in which both particles and the black holes inside that space emerge, exactly, as excitations of a finite collection of fluctuating discrete elements. Not a continuous quantum field. Not an infinite-dimensional Hilbert space. A finite, discrete system whose collective configuration encodes the geometry of spacetime itself. The shape of the space is the computation.

In 2021, Susskind extended the result to large black holes and found that the rates of exponentially rare fluctuations calculated from the discrete model matched the gravitational calculations in surprising detail. He called the original paper seminal. The finite-element description was not just a mathematical curiosity. It computed the right physics, because the geometry was always an emergent property of the discrete structure underneath.

I spent the next several years on the same theme, with Michael Dine and others, in different language and at different scales. Moduli stabilisation. Metastable vacua. Moduli decays and gravitinos. The fate of nearly supersymmetric states. Every paper was a different angle on the same question. In an enormous configuration space, what survives?

The answer, when I finally stopped trying to dress it up, was this: the physics filters the space for you. Most configurations are unstable, decaying rapidly into things that are boring or dead. The metastable ones, the ones that survive for cosmologically long times, are rare and special. The landscape does not require an external selection rule. The dynamics select. And once you accept that, the apparent crisis of "too many vacua" inverts. The surviving structure is what physics is doing on its own.
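A toy way to see "the dynamics select" is to drop many random starting configurations onto a small energy landscape and let noisy gradient flow run. The sketch below assumes a hypothetical one-dimensional potential U(x) = (x^2 - 1)^2, with minima at x = -1 and x = +1 and a barrier at x = 0, and uses overdamped Langevin dynamics integrated with the Euler–Maruyama scheme. The potential, temperature, step size and walker count are arbitrary illustrative choices. This is not a model of the string landscape or of any construction in the papers cited here, only a picture of a continuum of starting points collapsing onto a few long-lived basins.

```python
import math
import random


# Hypothetical toy landscape U(x) = (x**2 - 1)**2: two minima at x = -1 and
# x = +1, separated by a barrier of height 1 at x = 0. Purely illustrative.
def grad_u(x: float) -> float:
    """Derivative dU/dx of the toy double-well potential."""
    return 4.0 * x * (x * x - 1.0)


def relax(n_walkers=500, temperature=0.02, dt=0.01, steps=1500, seed=0):
    """Run overdamped Langevin dynamics (Euler-Maruyama) from uniform random starts.

    Each walker obeys dx = -U'(x) dt + sqrt(2 T dt) * N(0, 1) at every step.
    """
    rng = random.Random(seed)
    xs = [rng.uniform(-2.0, 2.0) for _ in range(n_walkers)]
    noise_scale = math.sqrt(2.0 * temperature * dt)
    for _ in range(steps):
        xs = [x - grad_u(x) * dt + noise_scale * rng.gauss(0.0, 1.0) for x in xs]
    return xs


final = relax()
left = sum(1 for x in final if x < 0)   # walkers that settled in the left basin
right = len(final) - left               # walkers that settled in the right basin
print(f"left well: {left}, right well: {right}")
```

With these assumed settings the barrier is fifty times the thermal energy (height 1 versus T = 0.02), so hops between the wells are exponentially rare on the simulated timescale. Every walker should end up close to one of the two minima, and none should remain near the barrier top: a continuum of starting points has been filtered down to two surviving states by the dynamics alone.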

What this has to do with AI hardware

I left academia in 2010 and spent the next sixteen years building AI companies, in advertising technology, finance, cybersecurity, and biotechnology. The mathematics never went away. A learning system is a vast configuration space, mostly unstable, with a small number of metastable states that survive. Training is the search for those states. Inference is the act of running inside one. The whole machinery of modern artificial intelligence is, at bottom, statistical physics with brand names attached.

So here is the part that took me almost a decade to see clearly, and I will spell it out because the connection is not obvious from outside the field. The mathematics that AI is actually doing, probability and stochastic dynamics and the slow filtering of a configuration space, is the same mathematics the physicists wrote down a hundred years ago. The silicon that AI runs on is not. Digital logic was designed for branches and Booleans, the work of databases and operating systems and word processors. The probabilistic workload was never in the original specification for the transistor. It was grafted on, simulated through arithmetic, and the cost of that simulation has now become the dominant cost of running anything resembling a frontier model. Roughly eighty percent of the energy on a modern GPU is spent moving data rather than computing with it. The world already needs about ten times more inference than the existing architecture can physically supply. None of this is going to be repaired by making the next generation of chips a little smaller and a little faster. The architecture is the wrong shape.

The deeper claim, the one that made me leave the comfortable cycle of building yet another AI company on top of someone else's silicon, is that the workload was always physical. A system fluctuating on an energy landscape is computing something. It is doing that work whether or not a GPU is there to interpret it. The mathematics of the universe I studied and the mathematics of the AI systems I built are the same mathematics at different energy scales. Once you see it, the question of what to do about the inference bottleneck stops being a question about transistors and starts being a question about whether you are willing to use the physics or fight it.
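One concrete reading of "a fluctuating system is computing something": overdamped Langevin dynamics at temperature T has the Boltzmann distribution p(x) ∝ exp(-U(x)/T) as its stationary distribution, so simply time-averaging an observable along one noisy trajectory estimates its thermal expectation. The sketch below is a minimal illustration under assumed parameters (a harmonic well U(x) = k x^2 / 2 with k = 1, T = 0.5, step size 0.01), chosen because the exact answer, ⟨x^2⟩ = T/k, is known. The function name `langevin_average` is hypothetical, and this is a software simulation of the dynamics offered only to make the mathematical claim concrete, not a description of any particular hardware.

```python
import math
import random


def langevin_average(observable, grad_u, temperature, dt=0.01,
                     steps=400_000, burn_in=1_000, seed=1):
    """Time-average `observable` along one overdamped Langevin trajectory.

    For small dt and long runs this approximates the Boltzmann average of
    `observable` under p(x) proportional to exp(-U(x) / temperature).
    """
    rng = random.Random(seed)
    noise_scale = math.sqrt(2.0 * temperature * dt)
    x = 0.0
    total = 0.0
    for step in range(burn_in + steps):
        # Euler-Maruyama step: drift down the gradient plus thermal noise.
        x += -grad_u(x) * dt + noise_scale * rng.gauss(0.0, 1.0)
        if step >= burn_in:  # discard the initial transient
            total += observable(x)
    return total / steps


# Assumed harmonic well U(x) = k x^2 / 2; its exact Boltzmann average of x^2 is T / k.
k, temperature = 1.0, 0.5
estimate = langevin_average(lambda x: x * x, lambda x: k * x, temperature)
print(f"Langevin estimate of <x^2>: {estimate:.3f}  (exact: {temperature / k:.3f})")
```

No sampling loop over an explicit probability table appears anywhere; the noise and the drift do the averaging. The finite step size adds a small bias (for this linear case the discretised chain's stationary variance is T / (k(1 - k·dt/2)), about 0.5% high), and the statistical error shrinks roughly as the inverse square root of the simulated time.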

We are using it. The rest is what we are building.

Further reading

- Banks, Fiol, Morisse, arXiv:hep-th/0609062 (2006). Quantum theory of de Sitter space.

- Susskind, arXiv:2109.01322 (2021). Extension to large black holes.

- Dine, Festuccia, Morisse, Sun, arXiv:hep-th/0612189 (2006). Moduli stabilisation.

- Dine, Festuccia, Morisse, arXiv:0712.1397 (2007). Metastable vacua.

- Dine, Kitano, Morisse, Shirman, arXiv:hep-ph/0604140 (2006). Moduli decays and gravitinos.

- Dine, Festuccia, Morisse, arXiv:0901.1169 (2009). The fate of nearly supersymmetric states.

- BFSS matrix model. The matrix-mechanical formulation of M-theory that underlies much of this line of work.

- Calabi–Yau manifolds. The geometries used to compactify the extra dimensions of string theory.