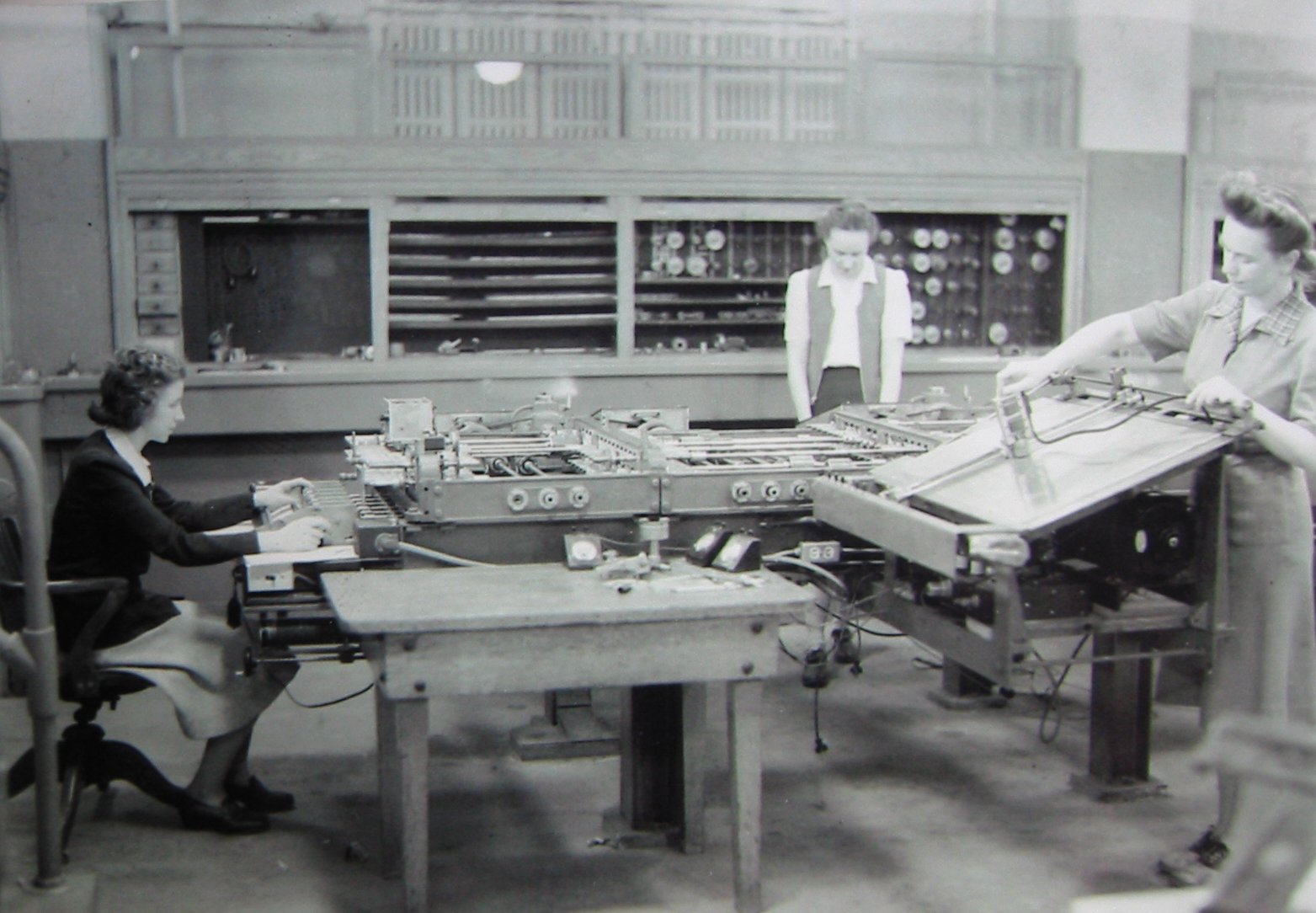

Kay McNulty, Alyse Snyder, and Sis Stump operate the differential analyser at the Moore School of Electrical Engineering, Penn, c. 1942–45. Public domain via Wikimedia Commons.

The Other Computer

The decision was correct. It is also expiring.

There is nothing inevitable about the computer in front of you. It is the descendant of a single decision made in the late 1940s, really the only decision that was thinkable at the time, when logic was supreme and probability had not yet found its way into silicon. The decision was this: a machine that thinks in ones and zeros can be shipped, sold, and reasoned about by anyone who reads the manual, while a machine whose answer drifts a little each time cannot. The first kind became an industry, and the second became a museum. Almost everything that has happened in the seven decades since is the working out of that single decision.

The decision was correct. It is also expiring.

A normal computer works by flipping little switches. Each switch is a transistor charging and discharging a tiny capacitor, which costs about ½CV² of energy per flip, and the chip does this trillions of times a second, in long sequences, to run a program. Spreadsheets, search engines, operating systems, and video calls all need that kind of determinism, and a chip built around switching gives it to them cleanly and cheaply. Then artificial intelligence arrived, the workload turned probabilistic, and the cost of running probability on a substrate built to flip deterministic switches is now a multi-billion-dollar tax every hyperscaler carries on their capex slides.

This is the story of the other computer. We did not build it in the 1940s because no one would buy a machine whose answers drift. In the AI age, where the workload is built on probabilities, we are building a computer that runs that mathematics rather than approximates it.

The track that won

What made the digital track win was not the transistor; it was the abstraction the transistor enabled. A transistor switching between two voltage levels is, in effect, a clean Boolean, and every layer of digital computing builds on that one fact. Gates compose them, registers store them, adders chain them, memory hierarchies cache them, compilers turn programs into streams of them, operating systems orchestrate them, networks ship them across continents. The whole ringing tower of modern computing is a tower of Booleans.

Booleans do not care about noise. A transistor can wobble a millivolt and the answer is still the answer; a transmission line can pick up interference and the receiver throws it away at the threshold. The trade was that you gave up everything in between, and in exchange you never had to worry about anything in between.

This compounded along every axis at once: faster clocks meant more Booleans per second, smaller transistors meant more per chip, fatter memory buses meant more per fetch, and every level of the system was tuned for forty years to do exactly one thing, which was to switch noiseless Booleans as cheaply and as quickly as possible. By 2024, a single GPU could perform tens of trillions of operations on them per second.

Forty years of compounding margin came out of that one substitution.

The other track

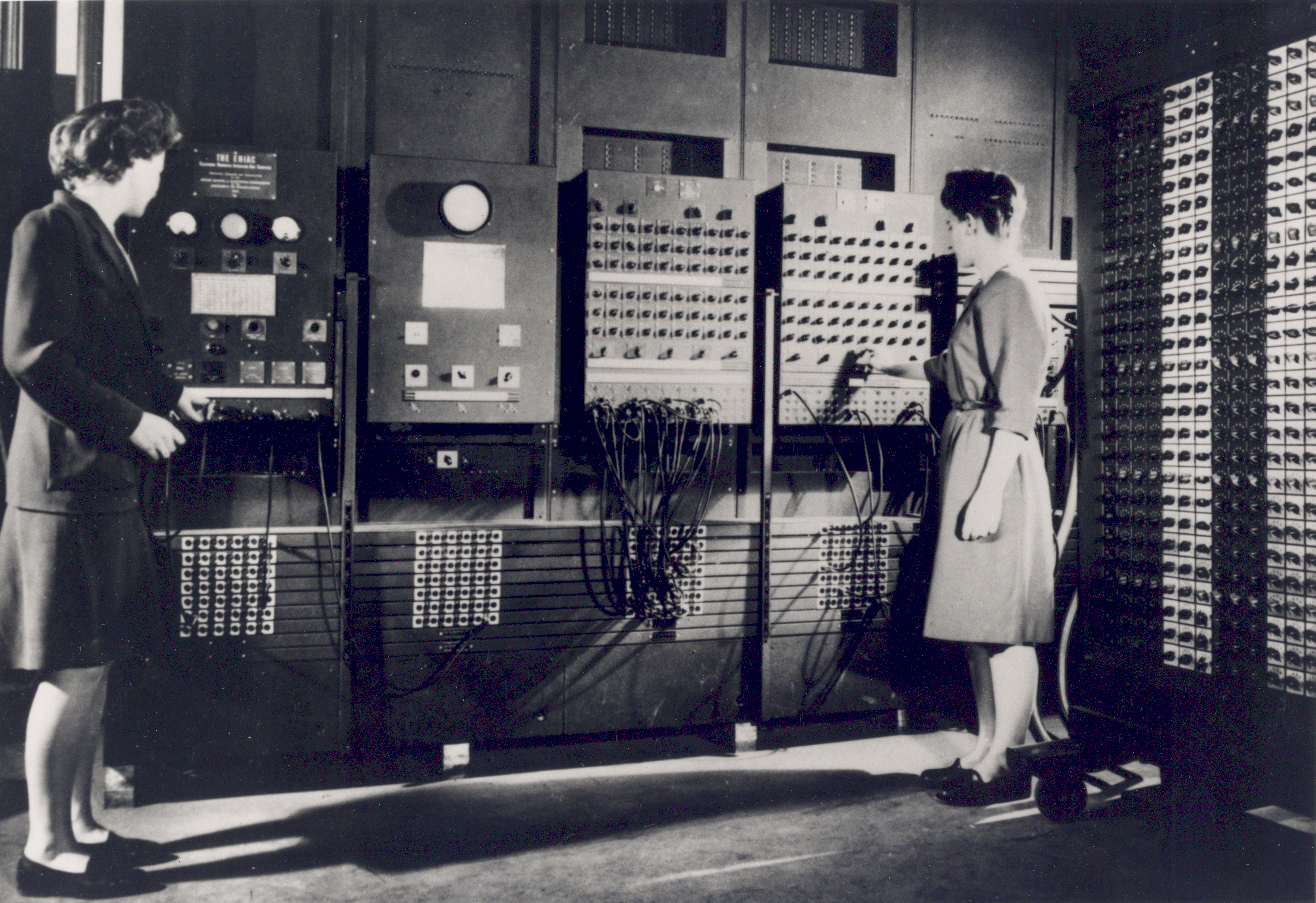

While the digital track was being built, a quieter parallel track was being built next to it. The first analog computers, electromechanical and then electronic, predate ENIAC. Vannevar Bush's differential analyser at MIT in 1931 solved differential equations by mechanical integration. The Bell Labs M-9 gun director, used during the Second World War, controlled anti-aircraft batteries through analog feedback. Through the 1950s and 1960s, analog and hybrid computers ran missile guidance, flight simulators, and chemical-process control. They were not toys. They flew planes.

The thing the analog track had, that the digital track did not, was that its computation was physics. A circuit summing currents was performing arithmetic by Kirchhoff's law, with no clock, no instruction stream, and no abstraction layer. A circuit integrating a voltage was performing calculus, in real time, exactly. The energy cost of an analog operation was, fundamentally, the energy cost of nudging an electron the right way, which was orders of magnitude lower than the cost of fetching a Boolean from memory and running an arithmetic logic unit on it.
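The current-summing idea can be written down as a minimal sketch. The function name, the crossbar layout, and the component values below are illustrative assumptions, not a description of any historical machine. The code only mimics numerically what the circuit does physically: each branch carries a current G·V by Ohm's law, and the wire where branches meet adds them by Kirchhoff's current law.

```python
def crossbar_currents(conductances, voltages):
    """Return the current on each output wire of a resistive crossbar.

    conductances[i][j] is the conductance (siemens) joining input row i to
    output column j; voltages[i] is the voltage (volts) driven on row i.
    Ohm's law gives each branch current G*V, and Kirchhoff's current law
    sums the branches that meet on a column: I_j = sum_i G[i][j] * V[i].
    """
    n_rows, n_cols = len(conductances), len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("one voltage per input row is required")
    return [
        sum(conductances[i][j] * voltages[i] for i in range(n_rows))
        for j in range(n_cols)
    ]


# Hypothetical values: microsiemens-scale conductances, sub-volt inputs.
G = [[1e-6, 2e-6],
     [3e-6, 4e-6]]
V = [0.5, 1.0]
I = crossbar_currents(G, V)  # amperes on each of the two output columns
```

In the physical circuit the sum costs no instructions at all; the loop here exists only because a digital machine has to simulate the wire.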

The analog track had two problems. The first was noise. An analog computer that drifted a millivolt computed a slightly wrong answer. The second was generality. A given analog circuit solved a given equation, and reprogramming it meant rewiring it. The digital track, which threw noise away and reprogrammed by changing software, scaled along axes the analog track did not.

By 1980 the trade was effectively over. Analog computers were museum pieces. Hybrid systems survived in narrow industrial niches. The mainstream of the field consolidated, completely, around the digital substrate.

The neural detour

The analog track did not quite die. In the 1980s it found strange new advocates.

In 1982, John Hopfield, a Caltech physicist who had spent much of the previous decade working on problems in molecular biology, wrote down a network of binary units coupled by symmetric weights whose deterministic dynamics carried it inevitably to a local minimum of an energy function. He framed it explicitly in the language of statistical mechanics, with reference to spin glasses, and the consequence was the kind of reframing the field rarely permits itself: a physical system, given the right couplings, computes by settling. The minimum was the answer; the relaxation was the computation.
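Computing by settling can be shown in a small sketch. Several choices here are assumptions made for illustration: units take values ±1 (a common textbook convention; the 1982 paper uses 0/1 units), the weights come from a Hebbian outer-product rule, and the function names are hypothetical. The point is only that asynchronous threshold updates under symmetric, zero-diagonal weights never raise the energy, so the state slides into a minimum.

```python
import random


def hebbian_weights(patterns):
    """Symmetric, zero-diagonal weights storing the given +/-1 patterns."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / len(patterns)
    return w


def energy(w, s):
    """E(s) = -1/2 * sum_ij w_ij s_i s_j."""
    n = len(s)
    return -0.5 * sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def settle(w, state, rng, max_sweeps=20):
    """Update one unit at a time until no unit wants to change."""
    s = list(state)
    n = len(s)
    for _ in range(max_sweeps):
        changed = False
        for i in rng.sample(range(n), n):  # random visiting order
            field = sum(w[i][j] * s[j] for j in range(n))
            new_value = 1 if field >= 0 else -1
            if new_value != s[i]:
                s[i] = new_value
                changed = True
        if not changed:  # fixed point: a local energy minimum
            break
    return s


rng = random.Random(0)
stored = [1, -1, 1, -1, 1, -1, 1, -1]
weights = hebbian_weights([stored])
noisy = list(stored)
noisy[0], noisy[3] = -noisy[0], -noisy[3]  # corrupt two of the eight units
recalled = settle(weights, noisy, rng)
```

With a single stored pattern and two corrupted units, the relaxation restores the pattern, and the energy of the recalled state is lower than that of the corrupted one.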

Three years later, David Ackley, Geoffrey Hinton, and Terry Sejnowski generalised Hopfield's deterministic relaxation into a stochastic one and gave it a learning rule. The Boltzmann machine was a network of binary nodes coupled by symmetric weights that computed by reaching thermal equilibrium, and trained by taking the difference of correlations between two equilibria, one with input clamped and one free. The whole machinery was statistical mechanics applied to learning, and the promise was that a system could not only compute by settling but learn what to settle into.

Around the same time, at Caltech, Carver Mead and his students were building analog vision chips that ran neural computation directly in subthreshold transistors, at a thousandth of the power of the digital alternatives. Misha Mahowald, a graduate student in Mead's lab, built the silicon retina in 1988, and Richard Lyon and Mead built the silicon cochlea the same year. The chips could see and hear, consumed almost no energy, and were beautiful pieces of work.

Nobody bought them.

In 1989, Intel announced the 80170 ETANN, the Electrically Trainable Analog Neural Network, and began shipping the part the following year. It contained sixty-four neurons and ten thousand two hundred and forty synapses, performed two billion connections per second, and consumed about a watt and a half. It could recognise handwritten digits, classify sonar returns, and track targets in missile seekers, and the Department of Defense bought some.

What the ETANN could not do was learn. The weights had to be calculated externally on a conventional computer and then burned into the chip's floating-gate memory, and within a few years the part was quietly discontinued, on the grounds that Moore's Law was doubling transistor density every eighteen months and brute-force digital would inevitably catch up.

The Boltzmann machine ran into a deeper problem before the abandonment came. The 1985 learning rule of Ackley, Hinton, and Sejnowski required sampling from the network's equilibrium distribution twice per update, once with the data clamped at the visible nodes and once with the network running freely, and taking the difference of the resulting correlations. To get an unbiased gradient, both phases had to actually reach the stationary distribution between samples, which took an unbounded number of stochastic steps and scaled badly with the number of nodes. On the digital hardware of the late 1980s, training a Boltzmann machine of any non-trivial size took longer than any practical experiment would tolerate, while a backpropagation network could solve the same task in hours.
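The shape of that learning rule, and the reason it is expensive, can be seen in a toy sketch. The simplifications are assumptions made for illustration: the network is fully visible (no hidden units, so the clamped phase reduces to averaging over the data), units are ±1, the temperature is 1, and the free-phase correlations are computed exactly by enumerating all 2^n states rather than by sampling. That enumeration is precisely what stops being possible as n grows.

```python
import itertools
import math


def model_correlations(w):
    """Exact <s_i s_j> under p(s) proportional to exp(sum_{i<j} w_ij s_i s_j)."""
    n = len(w)
    z = 0.0
    corr = [[0.0] * n for _ in range(n)]
    for s in itertools.product((-1, 1), repeat=n):  # all 2**n states
        weight = math.exp(sum(w[i][j] * s[i] * s[j]
                              for i in range(n) for j in range(i + 1, n)))
        z += weight
        for i in range(n):
            for j in range(i + 1, n):
                corr[i][j] += weight * s[i] * s[j]
    return [[c / z for c in row] for row in corr]


def data_correlations(data, n):
    """Clamped-phase statistics: average s_i s_j over the training set."""
    corr = [[0.0] * n for _ in range(n)]
    for s in data:
        for i in range(n):
            for j in range(i + 1, n):
                corr[i][j] += s[i] * s[j] / len(data)
    return corr


def boltzmann_step(w, data, lr=0.1):
    """w_ij += lr * (<s_i s_j>_clamped - <s_i s_j>_free), upper triangle only."""
    n = len(w)
    pos = data_correlations(data, n)
    neg = model_correlations(w)
    return [[w[i][j] + lr * (pos[i][j] - neg[i][j]) for j in range(n)]
            for i in range(n)]


# Toy data: units 0 and 1 always agree; unit 2 is unrelated to both.
data = [(1, 1, -1), (1, 1, 1), (-1, -1, 1), (-1, -1, -1)]
w = [[0.0] * 3 for _ in range(3)]
for _ in range(200):
    w = boltzmann_step(w, data)
```

After training, the model's correlation between units 0 and 1 approaches the data's, while the couplings to unit 2 stay at zero. Replacing the exact enumeration with samples from a Markov chain is where the equilibrium requirement described above comes in.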

The community's response came in two parts, mostly through Hinton's group, and it took roughly two decades to play out. The first part was architectural. The Restricted Boltzmann Machine, a bipartite Boltzmann machine with no within-layer connections (originally introduced by Paul Smolensky in 1986 as the Harmonium), was rediscovered as the right substrate. The bipartite structure means that, given the visible layer, all hidden units are conditionally independent, and given the hidden layer all visible units are too, so each half-step of Gibbs sampling can update an entire layer in parallel.
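The conditional independence can be made concrete with a short sketch, assuming 0/1 units with logistic conditionals (the usual RBM parameterisation). The names `W`, `b`, and `c` for the weights, visible biases, and hidden biases are illustrative. Each unit's probability depends only on the opposite layer, so every comprehension below could run in parallel.

```python
import math
import random


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def hidden_probs(v, W, c):
    """p(h_j = 1 | v) for every hidden unit j; no hidden-hidden terms appear."""
    return [sigmoid(c[j] + sum(v[i] * W[i][j] for i in range(len(v))))
            for j in range(len(c))]


def visible_probs(h, W, b):
    """p(v_i = 1 | h) for every visible unit i; no visible-visible terms appear."""
    return [sigmoid(b[i] + sum(W[i][j] * h[j] for j in range(len(h))))
            for i in range(len(b))]


def sample(probs, rng):
    """Draw each binary unit independently from its own probability."""
    return [1 if rng.random() < p else 0 for p in probs]


def gibbs_sweep(v, W, b, c, rng):
    """One block-Gibbs sweep: all hidden units at once, then all visible units."""
    h = sample(hidden_probs(v, W, c), rng)
    v_new = sample(visible_probs(h, W, b), rng)
    return v_new, h


rng = random.Random(0)
W = [[0.0, 0.0] for _ in range(3)]  # 3 visible units, 2 hidden units
b, c = [0.0] * 3, [0.0] * 2
v_next, h_next = gibbs_sweep([1, 0, 1], W, b, c, rng)
```

In a general Boltzmann machine each unit's conditional depends on its neighbours in the same layer, so units have to be visited one at a time; removing those connections is what buys the layer-wide update.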

The second part was an algorithmic shortcut, and it is worth getting the direction of the trade right because the popular intuition has it backwards. The original 1985 learning rule required running the Markov chain to equilibrium for both the clamped and free phases, and that was the bottleneck. In 2002, Hinton showed that for the narrow purpose of pre-training a single Restricted Boltzmann Machine layer, you can start the Markov chain at a data sample, run it for only a handful of Gibbs steps, and read off the negative-phase statistics from where the chain happens to be. This procedure, contrastive divergence, does not approximate the equilibrium gradient faster; it optimises a different gradient that you can compute without ever reaching equilibrium. Read the 2002 paper now and the apology is on the page: the trick is described as a trick, and the fact that the estimate is biased, and optimises a different objective from the true log-likelihood, is admitted in the same paragraph in which it is proposed.

Persistent variants (Tieleman, 2008) and parallel-tempering schemes were developed afterwards because the basic procedure had real failure modes, including dropped modes and chains that simply oscillate. But for that one job, pre-training one RBM layer as initialisation for a deeper network, the workaround was fast enough to ship, and that narrow shortcut was the foothold the deep learning revival needed.
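A minimal sketch of the shortcut, under stated assumptions: 0/1 units, per-example updates, hidden probabilities rather than sampled hidden states in the statistics (a common practical choice, not something the text above specifies), and hypothetical names throughout. The chain starts at the data vector and stops after `k` sweeps, wherever it happens to be.

```python
import math
import random


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def cd_k_update(W, b, c, data, rng, k=1, lr=0.1):
    """One pass of CD-k over `data`, updating W, b, and c in place."""
    n_v, n_h = len(b), len(c)

    def p_hidden(v):
        return [sigmoid(c[j] + sum(v[i] * W[i][j] for i in range(n_v)))
                for j in range(n_h)]

    def p_visible(h):
        return [sigmoid(b[i] + sum(W[i][j] * h[j] for j in range(n_h)))
                for i in range(n_v)]

    for v0 in data:
        ph0 = p_hidden(v0)            # positive phase: data clamped
        v, ph = list(v0), ph0
        for _ in range(k):            # negative phase: only k Gibbs sweeps
            h = [1 if rng.random() < p else 0 for p in ph]
            v = [1 if rng.random() < p else 0 for p in p_visible(h)]
            ph = p_hidden(v)
        # Difference of correlations, with the free phase cut short.
        for i in range(n_v):
            for j in range(n_h):
                W[i][j] += lr * (v0[i] * ph0[j] - v[i] * ph[j])
            b[i] += lr * (v0[i] - v[i])
        for j in range(n_h):
            c[j] += lr * (ph0[j] - ph[j])


rng = random.Random(0)
n_visible, n_hidden = 3, 2
W = [[0.0] * n_hidden for _ in range(n_visible)]
b = [0.0] * n_visible
c = [0.0] * n_hidden
data = [[1, 1, 1]] * 4  # toy dataset: every visible unit is always on
for _ in range(50):
    cd_k_update(W, b, c, data, rng, k=1, lr=0.1)
```

On this toy dataset the visible biases can only rise, since every correction has the form `1 - v[i]`. Nothing in the procedure checks whether the chain has mixed, which is the source of the bias the 2002 paper admits to.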

By the mid-2000s the same group could stack Restricted Boltzmann Machines into Deep Belief Networks. Training a deep stack jointly was intractable for the same sampling reasons that motivated contrastive divergence in the first place, so the workaround was greedy. You trained the bottom RBM with contrastive divergence, froze its weights, treated its hidden activations as the visible data for the next layer, and repeated up the stack. The layer-by-layer training was a scaffolding to get usable weights into a deep network without ever having to sample from the joint distribution of all the layers at once. Once the stack was assembled, the resulting deep network ran end-to-end at inference and was fine-tuned with ordinary backpropagation. The 2006 paper by Hinton, Osindero, and Teh that put this together is the moment most historians point to as the start of the modern deep learning era.

What happened next matters more than the breakthrough itself. By around 2010 it was clear that better activation functions, principally the rectified linear unit (Nair and Hinton, 2010; Glorot, Bordes, and Bengio, 2011), and better weight initialisation (Glorot and Bengio, 2010) made the layer-by-layer pre-training step unnecessary altogether, and by 2012 AlexNet was winning ImageNet on a feedforward convolutional network trained directly by backpropagation on a pair of NVIDIA GPUs, with no Boltzmann statistics anywhere in the loop. The energy-based picture had survived just long enough to crack the deep training problem open, and then the field, having gotten what it needed from the thermal model, walked away from it. The shortcut that opened the door was the one specifically engineered not to do what the thermal model was originally for.

The deeper problem was never solved by contrastive divergence, and it has not been solved since. The whole class of sampling-based learning methods, from CD to persistent CD to parallel tempering and every successor that has been built in software or in dedicated hardware, is a sequence of statistical workarounds for one underlying gap. When nothing in the system is physically producing those correlations for you, you are stuck estimating them, and every estimator that has been tried is biased, expensive, or both. The thermal lineage as a learning method was abandoned along with everything else, and the question underneath it, of whether those correlations have to be estimated at all, was abandoned with it.

The DARPA decade

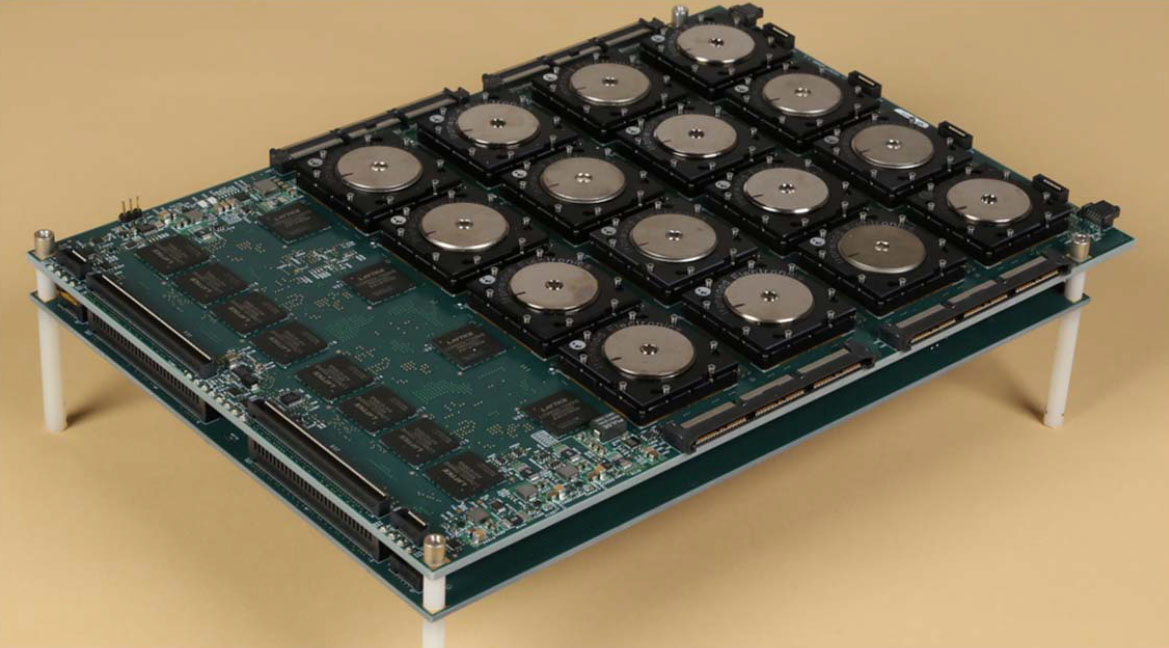

DARPA tried to save the analog track. The UPSIDE programme in the early 2010s funded HRL, Stanford, and several others to build mixed-signal neuromorphic processors. The SyNAPSE programme, also DARPA, gave IBM and HRL the contracts that produced TrueNorth, a million-neuron chip with four thousand and ninety-six cores demonstrated in 2014.

None of the analog or neuromorphic outputs of these programmes became commercial products. The chips worked. They were not what the customer wanted, because by 2014, two years after AlexNet had trained on a pair of NVIDIA GTX 580s and won ImageNet by a margin nobody had seen before, the customer was running Caffe or Theano on CUDA, and the entire developer surface they had built up around that stack was not going to migrate to a part with a different programming model.

Mythic AI raised more than one hundred and sixty million dollars and built something that deserves to be on the record. In September 2022 they demonstrated working analog compute-in-memory silicon running production object detection at sixty frames per second on three and a half watts. The technology was sound, the energy advantage was real, and the team had taken an idea most of the field had quietly given up on and made it run on shipped silicon. The runway closed two months later. The post-mortem from the VP of engineering was unusually candid: working chips, a software stack that no developer outside the company was set up to use, and no clear path to the workloads of the coming decade.

None of those programmes was really an alternative to the digital paradigm. They were faster substrates for its dominant operation, the matrix multiplication, and that is a different thing. Every analog compute-in-memory programme of that era did some version of the same trade: they took a probabilistic process, accepted the digital paradigm's translation of it into a tensor of weights and a stack of layers, accepted the training-by-discretised-gradient-step approximation, and then accelerated the multiply-accumulate that falls out at the end. That is a real and meaningful efficiency gain, but the mathematics being executed is still the mathematics the digital paradigm wrote down. The chip is faster at simulating the wrong kind of computation, not different in kind from the GPU it competes with.

None of those programmes failed because of physics. The physics was fine, the chips worked, the energy advantages were real, and they still failed, because each generation was an inference accelerator that depended on a digital GPU to do the training and a digital framework to express the model. Inference alone is not a moat, however efficient, and whatever lead you have at it NVIDIA closes by shipping a faster part and an updated stack. Without the training loop, and without changing what is being computed underneath, the analog substrate stays a peripheral.

The whole forty-year argument was a fight over the wrong noun. It was never the substrate. It was the loop.

The blind spot

What is worth noticing, in this longer telling, is that the missing piece across forty years of analog hardware was not the substrate. It was the loop in which the chip learns directly from its own dynamics, without external supervision and without a digital scaffolding sitting on top of it to do the work that the physics was supposed to do for free. The substrate that closes that loop was already implicit in the equations Boltzmann wrote down in 1877, in the spin-glass mathematics that Hopfield mapped onto computation in 1982, and in the analog circuit physics that Mead reduced to silicon by 1989. Three pieces of the same machine, on the table, in plain view, for forty years.

The reason they were never assembled is that the people holding the pieces did not talk to each other: statistical physicists wrote in journals neural-network researchers did not read, neural-network researchers wrote in journals circuit designers did not read, and circuit designers worked to a tape-out cadence that no academic paper has ever respected. Each community had a way of life, a set of problems, and a vocabulary that made the other two communities sound like noise. The trade got made on those terms, the world chose digital, Moore's Law made the choice cheap, and the analog programmes that survived ended up building inference accelerators that still depended on a digital GPU to do the learning, because the people who could have closed the loop were never in the same room.

The room is not empty anymore.

Why now

In October 2024 the Royal Swedish Academy of Sciences gave John Hopfield and Geoffrey Hinton the Nobel Prize in Physics for foundational discoveries and inventions that enable machine learning with artificial neural networks. The committee was not awarding backpropagation in 2024, or the convolutional network, or the transformer. It was awarding the energy-based, thermal-equilibrium lineage that began with Hopfield in 1982 and ran through the Boltzmann machine and Mead's silicon. Forty years in the wilderness, then a Nobel, with a citation that read like a verdict.

The economic pressure had been building for almost as long. Dennard scaling, the rule that let transistors get smaller, faster, and cooler at the same rate, broke around 2005, clock speeds stopped climbing, dark silicon arrived, and the free lunch that had been hiding the real cost of running a probabilistic workload on a Boolean substrate ended quietly. The field has been paying full price for two decades. Modern GPUs spend roughly eighty percent of their energy moving data, not computing with it, and the world needs about ten times more inference than the existing architecture can physically deliver. The cost of brute-forcing the wrong substrate, of simulating probability on transistors engineered to throw it away, is now a six-hundred-billion-dollar item for the decade and an axiomatic von Neumann tax on every model the world wants to run.

The academic case has only sharpened in parallel. Equilibrium propagation (Scellier and Bengio, 2017) is an explicit attempt to put the learning loop into a substrate whose physics does the work the digital scaffolding currently does, written in the language of variational free energy and aimed squarely at the gap the 1985 Boltzmann machine left open. Diffusion models, the engine behind most modern image and video generators, sample from learned probability distributions over tens to thousands of iterative denoising steps, which is to say they are running Boltzmann's mathematics at hyperscaler scale on hardware that was not designed for it. Yann LeCun's group at Meta has spent the last four years working toward, in his own framing, a system whose computations live in an energy landscape rather than in a forward-pass-and-backprop pipeline. The three communities that once did not read each other are reading each other now, because the same answer is showing up in all three vocabularies.

What was prohibitive in 1989 is routine in 2026. The mathematics is older than the GPU and the fab processes are mature. The forty-year trade is closing. The only question left is who is already standing on the other side of it.

Further reading

Early computing

- Differential analyser — Vannevar Bush's mechanical analog computer at MIT, 1928–31, and its descendants.

- History of the transistor — Bell Labs, 1947.

- Analog computers — Computer History Museum — exhibits and timeline.

Neural networks meet analog silicon

- Hopfield, Neural networks and physical systems with emergent collective computational abilities, PNAS (1982). The original spin-glass network that taught the field a system can compute by relaxing.

- Boltzmann machine — Ackley, Hinton, and Sejnowski (1985), learning by sampling thermal equilibrium.

- Carver Mead and his 1989 book Analog VLSI and Neural Systems. The silicon retina (Mahowald) and silicon cochlea (Lyon and Mead) are the headline artefacts.

Neuromorphic chips, 2010s

- TrueNorth — IBM's million-neuron chip from the DARPA SyNAPSE programme, 2014.

The thermal track returns

- The 2024 Nobel Prize in Physics — awarded to John Hopfield and Geoffrey Hinton for the energy-based learning lineage that begins with Hopfield (1982) and the Boltzmann machine (1985). The Royal Swedish Academy's official citation is the cleanest validation of the thermal track on the record.

- Scellier and Bengio, Equilibrium propagation: bridging the gap between energy-based models and backpropagation (2017). The on-substrate learning rule, written in the language of variational free energy.

- LeCun, A Path Towards Autonomous Machine Intelligence (2022). The position paper that names the destination from the machine-learning side.